Why measuring the wrong metrics can hinder your organisational goals

When the UK Government introduced NHS reform plans in the early 2000s, it envisaged that better measurement would help modernise delivery and achieve better patient outcomes. High on its agenda was reducing lengthy A&E wait times. A target was set: “by 2004, no-one should be waiting more than 4 hours in A&E from arrival to admission, transfer or discharge.” But before long, ambulances were spotted lining up outside hospital entrances, having been encouraged to wait there in order to delay the start of the four-hour countdown.

Similar cases have been seen across sectors, from ‘teaching to the test’ in education to ‘skewing’ police efforts towards ‘easier-to-solve’ crimes under the ‘targets and terror’ regime. Two decades on, these consequences have become a cautionary tale about the risks of target-setting and ‘metric fixation’ in public management.

Such unintended consequences are a reminder of the challenges any leader must confront when considering how to measure impact. Many organisations claim to be data-driven, but unless they choose and measure the right metrics, data can become meaningless or, even worse, can divert effort away from organisational goals.

Here are six principles leaders should consider when thinking about measurement.

1. Choosing metrics means deciding what matters to your organisation, and what doesn’t

Implicit in any measurement framework is a set of messages about the values and practices of an organisation. Metrics (in various forms such as targets, KPIs, performance matrices, or evaluation frameworks) not only demonstrate organisational intent, they also steer performance. Effectively, they set the ‘rules of the game’, exercising dual ‘signalling’ and ‘disciplining’ functions (that is to say, showing what the organisation values, and steering efforts and accountability towards achieving these goals).

When governments or other large organisations set metrics, there’s often a catalytic function at the ecosystem level too. For instance, mission-oriented policies use deliberately bold, ambitious and time-bound targets to stimulate bottom-up innovation across sectors, actors and disciplines.

But deciding on metrics requires tough trade-offs. If you measure too many things then the ‘rules of the game’ become unclear. And, if nobody knows how to play the game, their behaviour won’t change and metrics become useless. Ask yourself: what really matters most to your organisation (and therefore is worth measuring) and what is less important? For example, an organisation with a mission to improve digital public service delivery must choose whether to focus efforts on quantifying the number of services accessed as a result of its interventions, getting feedback from users, or collecting data on second and third order impacts of service usage such as economic benefit or social inclusion.

2. Measuring the wrong things can steer action away from your goals

Well-selected metrics can produce positive incentives which steer activities towards achieving goals. Conversely, directing efforts towards activities that don’t matter diverts focus from those that do - in other words, ‘hitting the target and missing the point.’ And this comes at a cost. Common consequences include ‘goal displacement’, wherein efforts are directed towards measurable activities at the expense of meaningful change; ‘myopia’, where short-term results are prioritised over long-term progress; and ‘target decoupling’, a divergence between day-to-day organisational realities and reported metrics. The latter can be especially harmful because it gives decision-makers a false picture of organisational activities, undermining their ability to make good decisions.

Furthermore, dynamics commonly found in large organisations, such as siloed working and risk-aversion, tend to be amplified by metrics and targets which incentivise staff to pursue low-risk outputs for which they are directly accountable (as opposed to more experimental, long-term or collaborative approaches which will not produce guaranteed or measurable results).

3. What matters most can be hardest to measure

Measuring what really matters most is easier said than done. For public and third sector leaders, choosing clear and focussed metrics can be especially challenging while balancing considerations across multiple constituent groups and value frameworks. This can lead to ambiguous, undefined goals (‘goal ambiguity’) and, in some cases, excessive indicators and over-auditing.

At the other extreme, overly narrow goals can obscure what is most important. For example, if an organisation’s mission is to promote economic participation by providing digital services, measuring service response time and access requests will only paint part of the picture.

Be cautious of the tendency to focus on ‘easy-to-measure’ indicators (usually where data is easy to decipher, readily available, and reflects change quickly) at the expense of tacit, long-term measures. For most organisations, the latter are vital to gauge progress against the broader impact you seek to have. Invest efforts in finding creative ways to monitor this impact and overcome evidence gaps. For instance, you may want to use proxy indicators (used to measure unobservable outcomes) and combine multiple indicators (helpful for triangulating results and capturing multi-dimensional impacts). Often, combining methods can help you build a better understanding of complex, tacit impacts. Running a large-scale landscape analysis of digital service provision alongside a set of more detailed case studies, for example, will help you understand how these services are experienced and used in practice.

4. Get clear on the ‘north star’ of why you want to measure impact

Too often, measurement - commonly referred to in organisations as Monitoring, Learning and Evaluation (MLE) - becomes a ‘cottage industry’, disconnected from organisational realities. Contrary to its goals, this can result in reduced organisational visibility, misleading data points, and unnecessary administrative burdens.

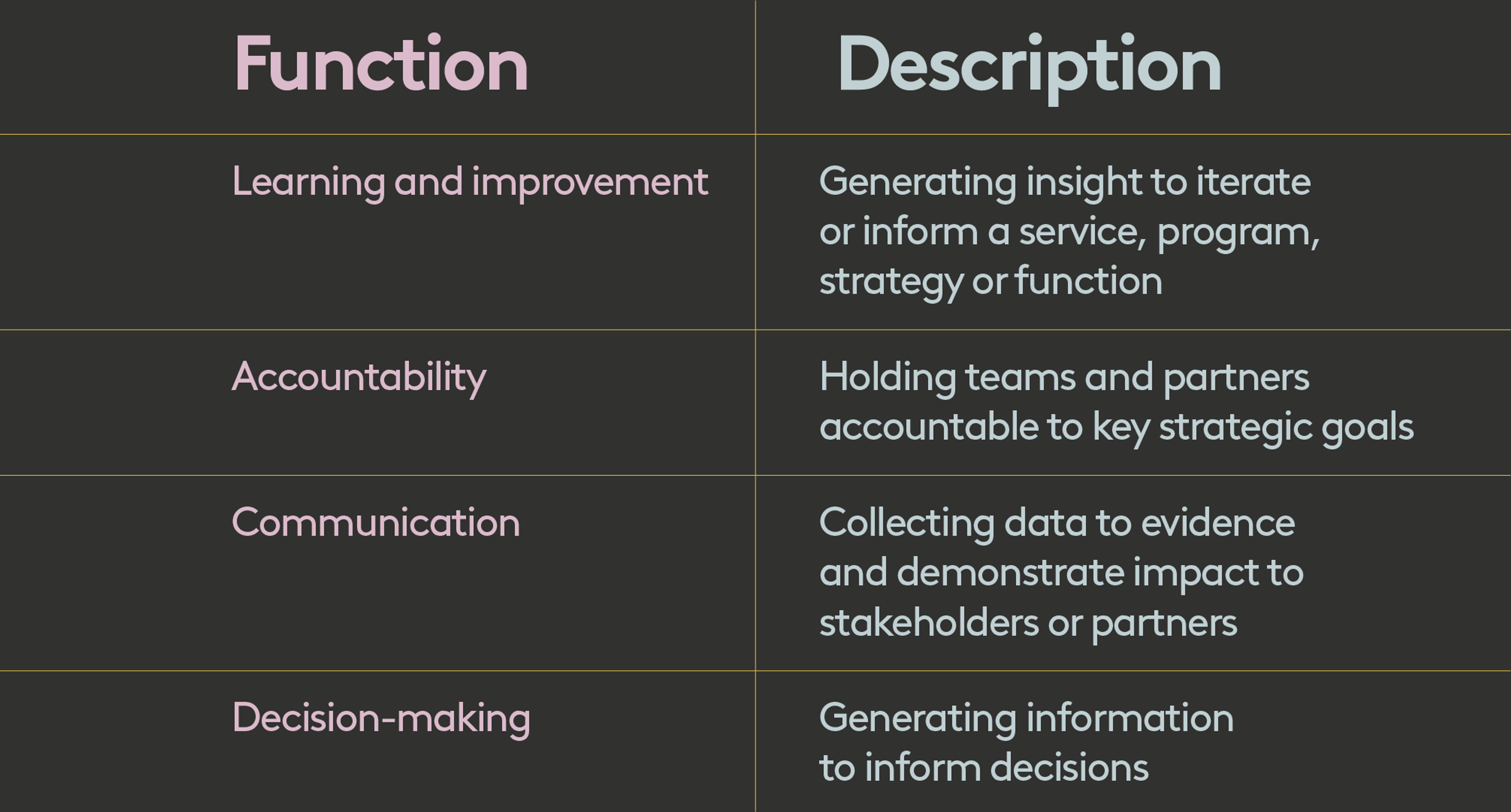

At the beginning of any measurement initiative, start by asking yourself and your teams why you want to measure impact and, in particular, what outcome(s) or problem(s) it will help you address. Usually, the answer will reveal that you are pursuing multiple functions (below are some of the most common).

If you find you’re pursuing multiple functions, try to distinguish primary versus secondary functions. This will help you make choices later down the line.

5. Use your ‘north star’ to prioritise and maintain focus, balancing both pragmatism and rigour

Measuring impact takes up time and resources, from designing frameworks through to collecting data and analysing results. A common pitfall is to underestimate the work involved in translating data into meaningful action, resulting in high volumes of data without the capacity to use it. After all, the value of data is in its application, not its existence. Getting clear on the why of measuring impact should provide you with a ‘north star’ to guide where to place your efforts.

Firstly, it guides decisions on what to measure. For example, collecting data on user complaints may help you improve a service (number 1 in the table above) but will not yield the best data points for convincing leaders to commit funding to your service (number 3). Secondly, focussing on why you’re measuring impact will steer decisions on how to measure - essentially, the methods and data collection approaches you will use.

When it comes to methods, it is key to balance pragmatism with rigour. For example, if your team is looking for quick, actionable insights to inform service iteration, you may opt for rapid user feedback over the deeper insights that a Randomised Controlled Trial or longitudinal study could provide. Always revisit the why as data emerges and question whether you are getting the insights you need. On a structural level, embedding measurement functions in teams - rather than in separate, siloed units - helps maintain alignment between the strategic purpose of measurement (the ‘north star’) and how it is operationalised day-to-day.

6. Finally, articulate and challenge your assumptions - and recognise measurement as an imperfect tool

Measurement forces you to make assumptions. This is because developing frameworks for measuring impact (such as Theories of Change, results frameworks or logframes) and selecting metrics to track (such as KPIs, operational metrics or performance targets) involve front-loading information or making predictions about how outcomes will develop. Unless you make these assumptions explicit, you risk entrenching untested logic, generating false certainty, and creating narrow or biased systems.

To mitigate this, measurement frameworks should have principles of reflection and adaptability baked in by design. Some simple tips include documenting assumptions early and revisiting them at regular intervals, setting aside space to document unanticipated impacts, and empowering staff (or even bringing in external facilitators) to challenge results and help iterate systems.

The aura of objectivity associated with data, especially quantitative data, can make it hard to question metrics. Positioning measurement as an imperfect learning tool can help elicit meaningful, critical engagement from teams. With the right conditions, measurement can be a powerful means of interrogating assumptions, so use it to test whether your organisation’s strategies and activities are in fact generating the outcomes you expected.

Information can be power, but only if it’s the right kind

When writing on the history of ‘targets and terror’ in UK public management, scholars Bevan and Hood stated that ‘what’s measured is what matters.’ They were right that choosing to measure an output or outcome signals significance and incentivises efforts to achieve it.

However, the phrase does not capture the agency of organisations to determine which metrics to track based on the strategic goals and practices they want to promote. Nor does it emphasise the importance of using data effectively to inform decision-making and steer activities across organisations. The key question is how organisations can identify and track the metrics that matter most, generate meaningful data, and use that data to direct progress and drive impact.

At Public Digital, we’ve helped organisations to define and quantify their impact goals, developing measurement systems and using insights to learn and iterate.

If this blog has prompted thoughts or you’d like to discuss ways to measure impact in your organisation, reach out to anna.goulden@public.digital.

Written by

Anna Goulden

Principal Consultant